It’s true: Five is a ridiculous number for summing up a decade’s worth of video games. It’s far too small. One can’t even put together an already-insufficient list of one game per year with that little real estate. You should know, then, that this is a different kind of list.

This isn’t a list of highlights. It’s not here to remind you of monumental shifts, or of astonishing lows, or wonderful surprises. Instead, its aim is to tell a story. One story, occurring alongside and intersecting with many. Again, it’s not the only one. But it’s one we shouldn’t forget.

To tell it, we only need five games, with a sixth to carry us into the next 10 years.

I. Minecraft

The story of any decade always starts in the twilight of the previous one. Minecraft was first made available to the public in the early morning hours of May 17, 2009, by its then-sole creator, Markus “Notch” Persson. That version of Minecraft was a development build, a work-in-progress for curious forum users to toy with while Persson worked at turning it into a proper game.

An alpha build of Minecraft would soon go on sale as Persson formed a development studio, Mojang, to tend to the game, but it would be two years until the game was designated a “full release” on November 18, 2011. Development on Minecraft would never really end: to this day, the game is still updated by the team put together by Microsoft, Minecraft’s owner since the fall of 2014. The most recent patch, dubbed the Bedrock Edition, will bring full crossplay to Minecraft players, letting users on all platforms play together.

The ensuing decade would be defined by Minecraft, both directly and indirectly. Its unusual business model, where players were welcomed to buy access to a game while it was unfinished and actively being worked on, is now a viable release strategy for indie developers. Its regular cadence of post-release support, mostly seen earlier in massively multiplayer online games, would slowly be replicated across the industry as connected consoles matured in the current hardware generation. This, coupled with the open-ended, unstructured play Minecraft was designed for, was a quiet forebear of massive change. Minecraft led a shift in the industry’s attention economy toward the players of games and away from their creators, who had been walled away behind layers of public relations and carefully plotted marketing reveals. It’s one of the reasons why Kotaku’s six-year-old decision to embed in game communities was a viable one, and one still practiced here today.

Minecraft’s massive success heralded a stark generational divide in video game players, becoming, as Kotaku editor-in-chief Stephen Totilo would put it, “this generation’s Super Mario Bros.” That generation would be the one that would flock to YouTube and YouTubers, become “content creators” that made their own games and videos within Minecraft, and build a whole entertainment ecosystem entirely different from what we had seen before, one that we are only just now starting to understand.

Finally, there’s Persson himself. The developer made a fortune from Microsoft’s $2.5 billion purchase of Mojang, a sale that turned the heads of business reporters and signalled his exit from the wider community of games and game developers. His behavior in later years, mostly misogynist attacks and transphobic statements from his Twitter account, slowly led to his ostracization from gaming’s most public spheres. Then, in April, Microsoft declined to invite Persson to Minecraft’s tenth anniversary celebration, its most public effort yet to divorce Minecraft from the now-troublesome man who created it.

Maybe money soured Persson, maybe he was always this way. What he is now is an inconvenient truth, a living reminder that even in its moments of greatest success, the games industry is more likely to brush aside its most toxic elements than to confront them.

II. Mass Effect 3

Ten years ago, game designer Will Wright posited that a game is played for the first time not when someone gets it in their hands, but when they first hear about it. This perceived game, Wright argued, is the bar that the designer must reach or exceed. Fall short of this “low-res” mental ideal, and the player will be disappointed.

When 2012’s Mass Effect 3 is brought up today, its ending is often the reason why. As the conclusion to a beloved trilogy of science-fiction games, the pressure to finish well was bad enough based on the acclaim of the previous two games alone. But Mass Effect had another problem—from the very first game, released in 2007, Mass Effect was marketed in a way that stressed that players’ choices would matter. Decisions made in each game would affect how that game’s story would unfold, with the biggest choices carrying forward into future games.

The pitch worked too well. The Mass Effect trilogy’s centering of player choice, both in marketing and in game design, proved intoxicating. Entire species lived or died, governments rose or fell, and crewmates whom players spent dozens, if not hundreds, of hours with found hope or despair. So when it came time to end things, and the illusion of infinite choices had to be collapsed to a few definitive endings, some players found the small set of possibilities unacceptable. The fantasies of video games have limits, but the fantasies of marketing do not.

Some argued, reasonably, that the game’s final moments were inconsistent with the ethos of the trilogy’s game design. Many more argued loudly that the ending was simply insufficient, a shallow reward for five years of investment in a character. That complaint overemphasized the final 15 minutes of a very long game. Ultimately, those fans won out, and an underwhelming extended ending was released.

Go back and read any gaming outlet’s account of the saga, and you’ll see everything described with a healthy layer of euphemism. There’s “fan outcry,” the ending is “controversial,” and the exchanges between fans and the developers who receive their complaints are described as “dialog.” We’ve been around this block enough times now to call a spade a spade: Fans were pissed, and they were rude and loud about the video game not being what they thought it would be. Those fans certainly don’t speak for all fans, but few spent any of the last 10 years writing stories about respectful fans quietly registering their discontent, and too often has the silent majority been invoked to launder the reputation of video game malcontents.

The phrase player choice is a bit of an oxymoron—video games, by definition, mostly comprise choices the player must make: jump here or there, shoot that alien first or another, move now or hide. “Player choice,” then, is arguably an invention of marketing, one that’s meant to signal that the parameters of those choices have been widened to areas traditionally thought of as authored—usually, this means a game’s story. But like those old player choices of where to jump and shoot, the newer choices have hard parameters. Constraints that are easy to accept if you are Mario choosing one path over another become harder to grok in a narrative determined to woo you into believing your choices matter in a way that transfers authorship to you.

The truth is in the design, the spin is in its limits. To a certain extent, yes, you decide if the Krogan have a future, but you can’t not face Sovereign. You have some choices in Mass Effect, just like you have in The Witcher, The Outer Worlds, or any number of games. But you don’t have every choice.

Mass Effect is video games’ own sword of Damocles finally coming loose. A franchise that emphasized choice so much that when its final act made a decision players did not care for, they chose not only to reject it but to demand it be changed.

The game that existed in fans’ heads was more important than the game that actually got made, setting a pattern that would repeat itself with alarming regularity. It would repeat itself with Bloodstained: Ritual of the Night, with No Man’s Sky, with Fallout 76, with every game that had a “backlash.” We’ve never really stopped writing about the games fans imagine.

III. Tales From The Borderlands

The collapse of Telltale Games was a shock to everyone. The studio’s closure in the fall of 2018 was sudden and swift, with 250 people who reported to work on Friday, September 21 discovering that afternoon that they now had no job, no severance, and nine days of insurance coverage left.

It was a tragic end to a studio that had endured long cycles of crunch and tumult, as a years-long effort to recapture the success of its biggest critical hit, 2012’s The Walking Dead, was met with diminishing returns.

Over the last decade, we’ve become well-acquainted with the human cost of making video games. Crunch became a regular topic of discussion rather than an open industry secret. The oppressive, extreme labor required by modern live service games like Fortnite, or by top-tier marquee releases like Red Dead Redemption 2, was recounted in detail by reporters at Kotaku and elsewhere. Video games were destroying the people who made them.

One way to read Tales from the Borderlands, Telltale’s most unlikely project, is as a work of protest. The game’s story, about a disgruntled office drone who concocts a scheme with his best friend to screw over their oppressive employers and strike it rich, was developed at a time when reports indicated toxic management had just begun to take its toll on studio employees.

Released in five episodes between October 2014 and November 2015, Tales arrived as the studio had begun to dramatically increase its output in a way that would ultimately prove unsustainable, with a wave of layoffs in late 2017 presaging the studio’s 2018 demise. In a 2017 oral history, the developers characterized the game as a pipe dream, internally considered unlikely to succeed, thinking that nearly led to its cancellation midway through the season amid much turmoil and staff turnover. According to Nick Herman, one of the game’s directors, Tales was completed only because a skeleton crew agreed to stay on, volunteering to finish the back half of the series after 95% of the team was reassigned.

The tragedy of Telltale Games isn’t that it’s a different story from other high-profile stories of developer crunch and burnout, it’s that it’s the same story: Aggressive release schedules created at the behest of money people who disregard the health and well-being of developers, exploited for their “passion.” The biggest blow, however, was that, to the outside observer, Telltale’s house style of episodic video games seemed so sustainable, an answer to a question the industry desperately needs to solve.

IV. Broken Age

In 2016, one of the most arresting statistics in video games made the rounds: Nearly 40% of all video games on Steam, then the only real game in town for digital PC game distribution, had been released in that year alone. Video games, long a demanding medium to keep abreast of, now had hard data to confirm what many had felt: There was just no way for anyone to ever keep up with it all.

For most of its existence, Double Fine Productions was technically an independent studio, although the designation doesn’t feel fair compared to one- or two-person teams chipping away at games in the hopes of becoming the next Stardew Valley. Founded by Tim Schafer, one of the few well-known personalities in video games, the studio started life with major publisher support, releasing Psychonauts and Brutal Legend in the 2000s: games that failed to meet financial expectations and were met with uneven critical reception but went on to become cult classics.

Double Fine began the decade as, arguably, a failure, and in a bold reinvention began to produce small-scale games developed by small internal teams, a video game developer’s version of Moneyball. Then the studio made history, launching a record-breaking Kickstarter in 2012 to crowdfund a graphic adventure game in the LucasArts style, the kind of game Tim Schafer made his name on.

That game, Broken Age, would be released in two acts in 2014 and 2015, a case study in game development at the peak of the indie game gold rush. Double Fine’s success had opened the floodgates to what was possible with crowdfunding, and without publishers. Seven months after Double Fine’s campaign, when Obsidian Entertainment Kickstarted an RPG for $4 million, the message was clear: there was room for big indies and little indies alike, each appealing to the nostalgia of fans who missed something in a big-budget landscape that had begun to prefer the more microtransaction-rich games-as-a-service model.

During all this time, indie games kept flooding the digital marketplace. An “indiepocalypse” was frequently brought up, first in 2016, and again in 2018. The message was the same: there were too many indie games. How could any one game succeed?

Double Fine attempted to provide an answer, using the success of Broken Age to function as a small publisher of other indies. The studio published games that sat comfortably next to its own smaller titles, like MagicalTimeBean’s Escape Goat 2, as well as two games—Mountain and Everything—by acclaimed artist and animator David O’Reilly.

We’d never get to find out how well the Double Fine experiment would have worked. During Microsoft’s E3 media briefing last summer, Tim Schafer appeared on stage to announce that Microsoft had acquired his studio. In an interview with Gamasutra, Schafer stated that Double Fine’s games might be perceived by the public as too big a risk for a $60 purchase, and the security of Microsoft as a publisher and their on-demand Game Pass service would, for now, allow Double Fine to exist as it always had.

Looking back on a decade of high-profile studio closures, there really is no sure way forward for indies large or small beyond the lightning-in-a-bottle success of a Hollow Knight or Five Nights at Freddy’s. The writing seems to be on the wall in the twilight of this decade, as indie games are absorbed into subscription services like Apple Arcade or Xbox Game Pass, a business model that often bets on becoming a loss leader in order to expand its pool of subscribers, frequently at the expense of creator profit.

In that case, success might be worse than failure, re-organizing the world of indie games into a set of fiefdoms that once again places independent developers at the mercy of massive corporate gatekeepers.

V. Depression Quest

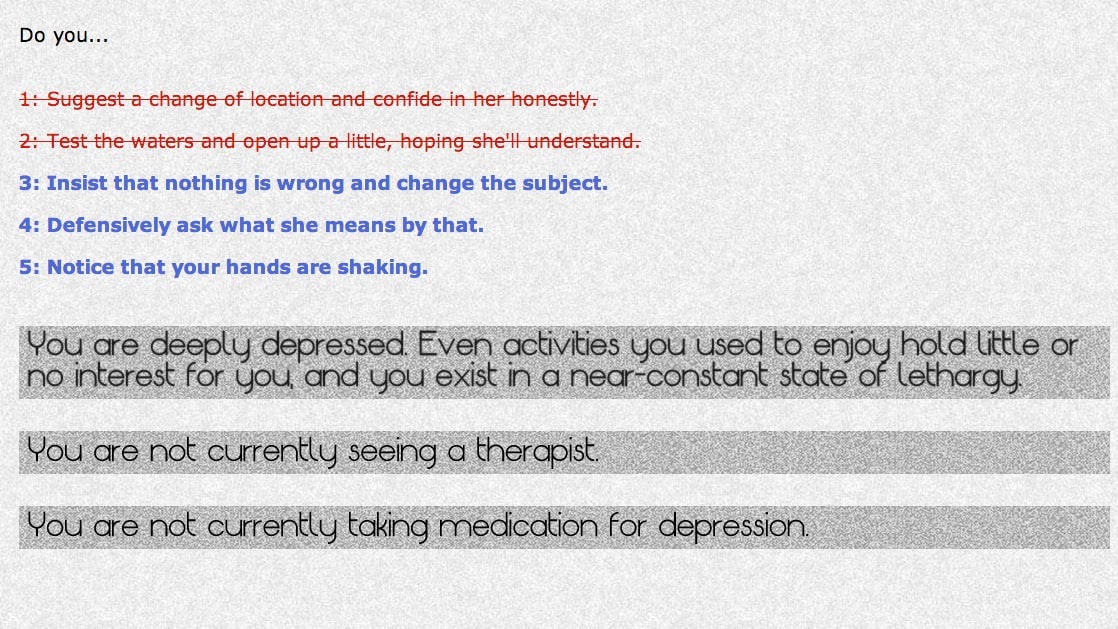

You can still play 2013’s Depression Quest. Zoe Quinn’s narrative game about the experience of living with depression was an early highlight of a burgeoning Twine game scene, a hypertext choose-your-own-adventure game that gradually deprived the player of choices as the symptoms of chronic depression began to take over.

Depression Quest is not often brought up on its merits as a video game anymore. This is a shame, as it was arguably the beginning of a more nuanced approach to the discussion of mental health in video games, which regularly reduced a character’s mental state to “sanity” meters and often featured villains with the reductive trope of mental illness used to justify their evil.

Instead, Depression Quest is most remembered for being a flashpoint in what became known as Gamergate, a sustained harassment campaign incited by a misogynist blog post, published in August 2014, that targeted the game’s developer. Online communities on sites like 4chan and Reddit, platforms where a hands-off approach to moderation allowed the unrestrained and bigoted ids of their more malicious users to flourish, found in the post’s target a tenuous connection to games journalism. With a series of disingenuous lines of argument, including false assertions that a press outlet (Kotaku) was unethical in its coverage of the game, these communities swept all manner of people into Gamergate’s orbit and created a smokescreen around their harassment of women and reporters.

The resulting confusion made it impossible to talk about Gamergate at the time, and even today the difficulty persists: the discourse has accrued a confounding number of arguments and counter-arguments, the same talking points repeated over and over again as people were harassed from their homes and out of the industry. In a definitive essay on the subject in October 2014, Deadspin writer Kyle Wagner identified the movement for what it was: the future of the culture wars, a social media-fueled grievance politics that was indifferent to truth and savvy to the media’s default approach to controversy and the easily manipulable flightiness of corporate advertisers.

Gamergate, as Wagner argued, formed a pattern now embraced by the leaders of the Republican party and the administration of President Donald Trump. Our current political discourse, much like Gamergate, exploits a problem in journalism we now glibly call both-sides-ism, a form of false equivalence where an issue is portrayed as having two equally valid sides when, in fact, there is asymmetry.

So, for example, when decades of scientific knowledge validate the efficacy of vaccines, or the reality of climate change, giving those who refuse to acknowledge those facts unchallenged consideration makes them seem like an equally acceptable counterpoint, and not deviant and harmful behavior. Both-sides-ism is an error made when the performance of objectivity is needed to placate bad-faith actors who will cry foul for being shut out of a conversation they have no real place in to begin with. And so white nationalists are profiled by major outlets, television pundits spout racist rhetoric as a corrective for the “liberal” mainstream, and the straw-man of a government that will forcibly take guns from its citizens is used to inoculate us against any discussion of gun control.

There is chilling power in this ideological staking of ground, one that forces those who dare to stand against it to face a wave of potential harassment for merely speaking up. Consider how difficult it is for games writers to discuss race, how unexamined misogyny allows industry-wide sexism to flourish, or how anything other than deference to the gamer outrage du jour (like, say, the Epic Games Store) will bring with it a predictable surge of toxicity. Every story of this nature, including this one, is written with this toxic reception in mind, and there is no way of knowing how many are abandoned because the writer has decided it’s just not worth the potential drain on their well-being.

The most troubling aspect of this series of nightmares is that they are not invasive exotics, not outside intrusions by malicious actors wholly uninterested in the future of video games or politics. These are all crises that were, essentially, permitted. For years, games journalism complied with decades of video game marketing that claimed the medium as a masculine space, and profiteering startups allowed our online spaces to go unmoderated and algorithms to game users’ attention and funnel young men into radicalized thought.

Together, the makers, players, and the critical establishment of video games, isolated from the wider culture, let toxicity fester, making excuses for it under the guise of “passion.” The horror that now overruns us is one that we invited in.

Coda — Nier: Automata

Nier: Automata is a game about nihilism. In the 2017 game, you play your way through a post-apocalypse with multiple endings, each one bleaker than the last. It gives you a few reasons to hope for a better world, and then strips them away, one by one. At the same time, it shows you the folly of characters who try to avert disaster again and again, only to be met with failure. Annihilation always waits at the end.

In the final moments of Automata’s “Ending E,” the proper, final conclusion of the game, you are presented with an impossible minigame, a bullet hell shooter that you cannot win. As you lose over and over again, the game taunts you, asking if you think games are silly little things, if it’s all pointless, if you accept the assertion that life is meaningless. Refuse to answer yes, and then something remarkable happens: You get help from someone else, another real player, who also refused to back down. Your one ship becomes many, and the impossible becomes possible.

Histories are often arguments, an interpretation of the available facts delivered as responsibly as possible. Histories, like good reportage, help the reader understand the context of the era they examine, and are forthright about the lens they are examining it with. As brief histories go, this one’s a downer by design. It’s one that argues that maybe there’s a reason that, every time a video game is delayed, developers write letters to “fans” with language of supplication, as if they were afraid of what those fans might do. One that argues that, maybe, the rampant harassment and misogyny that showed its face plainly over the last decade was always there, because it was permitted to exist. It’s a history written by someone who believes that these problems will persist, because they still haven’t been truly confronted.

Nier: Automata argues that clarity can be found in acknowledging the broken world around us, and the hopelessness that can come when we contemplate the enormity of fixing it. It is frank and cuts no corners, but it also finds faith in this: that if enough of us are willing to look at how the world is broken and how we helped break it, then, maybe, together, we can fix it. Every day, month, year, or decade does not have to be worse than the one before it. We can do the impossible, if we’re willing to look in the mirror.