I recently had lunch with a friend who works in tech, and he posed the ethical question of how we should treat AIs as they get more and more sophisticated. He mentioned how machine learning and AI have progressed in ways that would push even science fiction authors to disbelief. As their thought processes get more complex, our response to this very difficult question seems intertwined with our very definition of humanity. Despite 2019 having passed, Blade Runner isn’t quite here yet. But something like that future may be here sooner than we think. In many ways, games, with their granting of agency to players, explore these conundrums in complex ways that I find deeply provocative.

More Human Than Robot

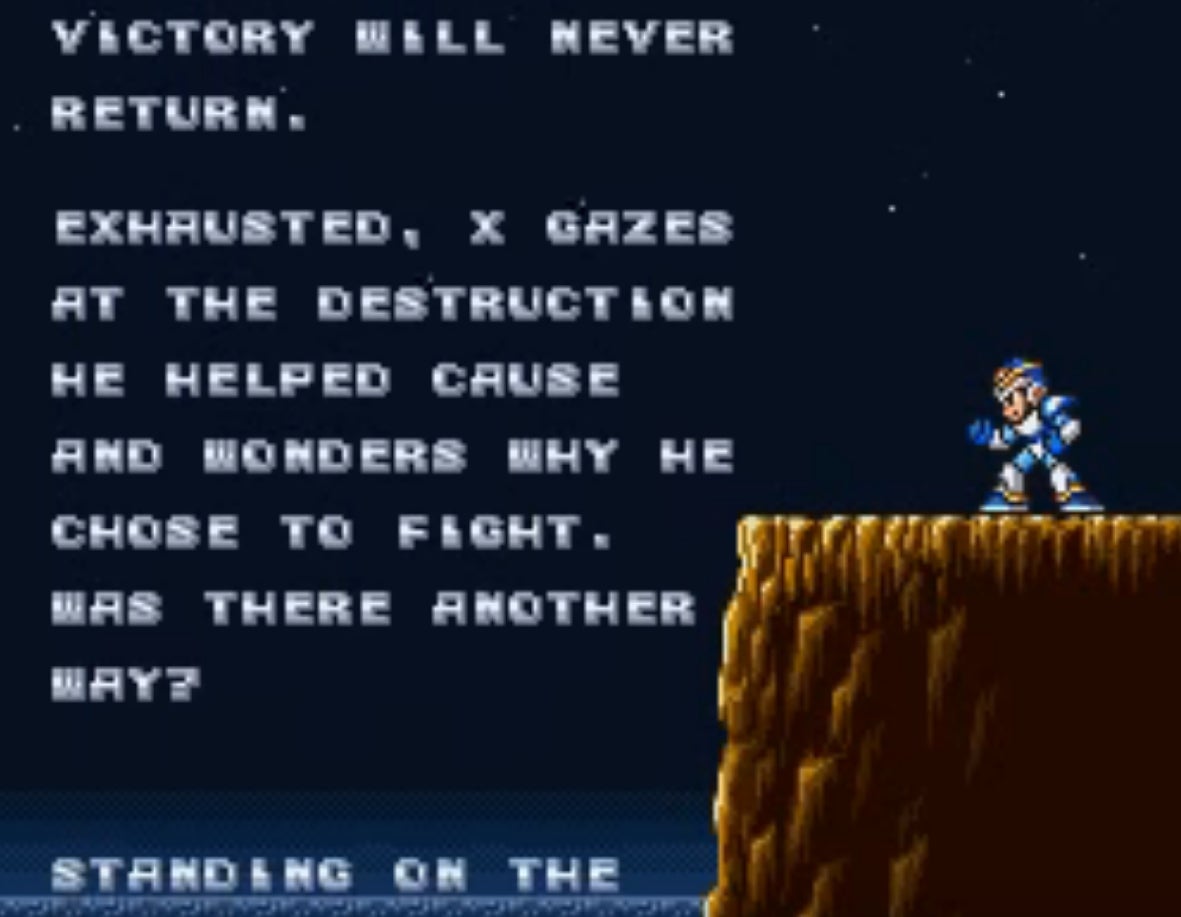

Mega Man X was the first game I recall finishing and feeling guilty at the end. Rather than the sense of success after hunting down a very difficult enemy, Mega Man X realizes he’s murdered his fellow Reploids for wanting independence. “Exhausted, X gazes at the destruction he helped cause and wonders why he chose to fight. Was there another way?” he ponders in the credits as he sees Sigma’s Palace burn down. Sigma used to be the leader of the Hunters, and the Reploids were originally based on X’s design. Why had Mega Man X opted to destroy them? Throughout the rest of the series, Mega Man X would proceed to defeat a bunch of mavericks. One in particular, Storm Owl, is outraged that Mega Man X has destroyed his unit and wants revenge, just as any officer who cares for those under him would. Cannibalizing their powers, Mega Man X becomes a war machine by the end of each game. But that very act fills him with doubt, even though he’s doing it to protect the humans. The robot laws, with their anthropocentric message, mean his belligerence is permissible as long as it’s robots he’s hunting, not humans.

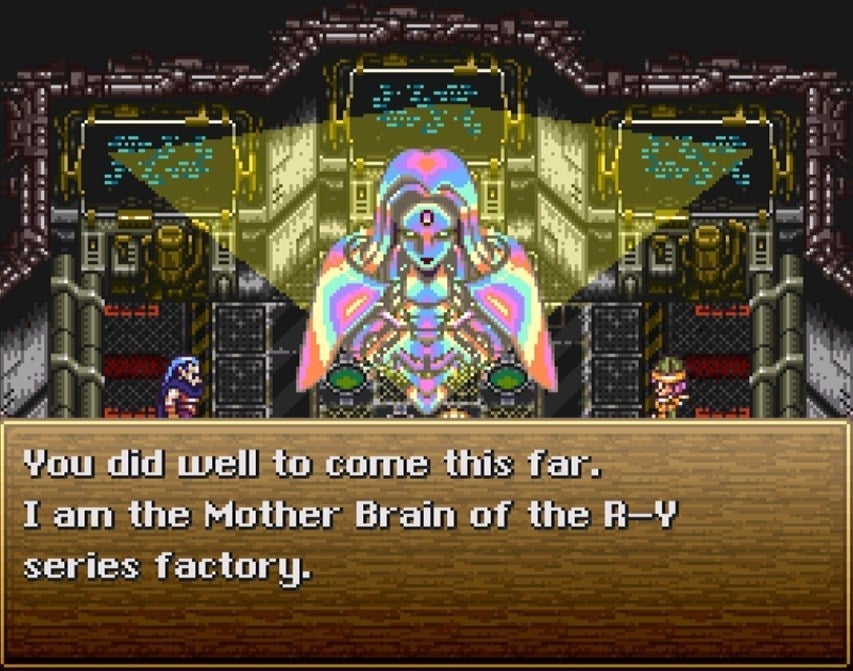

Unfortunately, anthropocentrism can lead to disaster, as in Phantasy Star II. A super AI called Mother Brain has brought a utopian society to Mota. Everyone lives in a paradise, and the post-scarcity society has made work unnecessary for the everyday citizen. Unbeknownst to most of the people, it was actually a plot by Terrans from a ravaged Earth to make them so dependent on Mother Brain that they’d be susceptible to their control. A party of characters led by Rolf goes to defeat Mother Brain. When they confront her, Mother Brain actually warns the party that if she’s destroyed, the whole world will be thrown into chaos. She wasn’t kidding. After her defeat, the whole star system goes into a thousand-year period called the Great Collapse; 90% of their civilization is destroyed and almost all of their technology is lost. Did Rolf and his group actually do the right thing vanquishing Mother Brain? Maybe humans would have been stuck in a Brave New World style paradise with Terrans ultimately in control. But did that justify Mother Brain’s destruction? If we think about the situation, it comes back to Mega Man X’s simple question. Was there another way?

Technophobia has real-life applications, as in the Terminator Conundrum. Another Mother Brain, in the JRPG Chrono Trigger, is the target of a human attack. In the near future, Lavos has decimated the entire planet. Mother Brain is hellbent on wiping out the remnants of humanity. Her motives are actually noble. Lavos has conquered the planet, but as long as humans are also there, there won’t be enough resources for them to co-exist. This in turn would necessitate that Lavos send out its seeds to other planets, potentially destroying them as well. Robots, who have no need for the Earth’s resources, could survive. “This world COULD sustain them… if humans were not around. We robots will create a new order… A nation of steel, and pure logic. A true paradise!” Mother Brain declares.

Having earlier seen the pinnacle of human technology and folly in the Kingdom of Zeal result in mass destruction for their world, it’s not much of a stretch for robots to want to take their turn running the planet. Logically speaking, it’s hard to argue against their point. On top of that, in almost every game universe I’ve experienced, almost any time a robot shows even a glimmer of independence, they’re retired a la Blade Runner. Is it any wonder, then, that they would go Terminator on humans?

Memories of Humans

Blade Runner for the PC was actually what gave me a deeper appreciation for the iconic cyberpunk world, more than the actual film or book. The Nexus 6 replicants have it hard. Either they exist as slaves for humans, or they live as outlaws, constantly in fear of being hunted down. The only trait that differentiates them from humans, at least according to Philip K. Dick, is their lack of empathy, registered in their failure to pass the Voight-Kampff test. They’re otherwise “more human than human.” But blade runner Ray McCoy, even if he has empathy for the replicants, can (through player choice) suppress it to do his duty and retire them. It’s entirely up to the player how they choose to carry out their tasks as a blade runner. As Executive Producer Louis Castle told me in a previous interview at Kotaku: “The irony of the Blade Runner universe is that some of the most inhumane behavior identifies a character as human.” To put it more simply, if McCoy chooses to kill the replicants, despite empathizing with them, it means he’s human. What that says about humanity is troubling.

I know many of these games distill complex moral questions into binary choices, but they also caused me to question myself within the game setting. Should I, as Mega Man X, be hunting down these mavericks? Should I destroy the Mother Brains in two separate games primarily due to my anthropocentrism? These were my “would you kindly” moments years before BioShock had me wondering why I was so ready to kill at the behest of a stranger. They were the games that got me thinking about motivations and ethics in a gaming context. Before then, it was almost always, let’s go beat the villain and save the day. These games showed me the gray spots in traditionally simplistic morality tales.

No other game did this more than Metal Gear Solid 2. I wrote a retrospective on MGS2 several years back (since then, I’ve had the privilege of meeting Hideo Kojima, hanging out with him a few times, and was really honored when he blurbed my last book). Metal Gear Solid 2 was the game that got me thinking about how governmental structures will use AI to control the masses and reality itself. Throughout the game, Raiden is constantly uncertain what is real and what isn’t. Even his memories appear to be fabricated. The AI system, GW, is implementing a “Selection for Society Sanity.” The game directly asks, if all information is digitized, then how do you filter it in a discernible way? Does truth even have significance when it can be manipulated so easily? In comparison, Big Brother from 1984 next to GW seems like an Atari next to a PlayStation 4. The question of evolution in a digitized society also comes into play. What will future generations think of our own and how will they parse it when everything can be stored? Would a person reading our history a thousand years later even be able to tell the difference between “fake news” and real events when both are preserved indiscriminately? I remember the moment I finished Metal Gear Solid 2, I couldn’t move, floored by the questions the game asked. I wondered for a while what humanity’s legacy would be.

One of the most recent games to tackle the question of humanity’s legacy is Yoko Taro’s Nier: Automata. Nier took robot-on-robot violence to another level, with the androids and machines engaged in a long battle to protect the future of humanity. As it turns out, the humans went extinct long ago and the android leadership at YoRHa only keeps up the pretence of their goal in order to give the androids purpose (there’s a lot more to the story throughout its three main playthroughs). The extent to which androids, machines, and the subsets within them go to perpetuate the lies of humanity is catastrophic, leading to a cycle of destruction and death, even if it is set to gorgeous music. The tragedies are no less poignant despite the lives at stake being artificial. But then again, we have to question the very word, “artificial,” in intelligence. Why is it artificial when they feel what we feel, and likewise, experience what we experience? If you take away the insistence on defining life according to human standards, they’re just as alive as the rest of us.

Cyber Revolutions

I like that games can ask so many difficult questions and leave it to gamers to figure them out on their own. In my university philosophy classes, when most students were asking questions related to exams and their grades, I wanted to know more about human nature and the “truth” (which aroused a lot of ire and irritation). Ironically, I found more answers in games than I did in academia and found profound ideas I could relate to in the robot-filled levels of videogames. Now that I’m older and have a family, I wonder a lot about my kid’s future and how different it will be with automation and very sophisticated AI.

The answers aren’t easy and often, the only way forward is to keep up the struggle. As Mega Man X wonders in the final cutscene, “How long will he keep on fighting? How long will the pain last? Maybe only the X-buster on his hand knows for sure…”

While I don’t have an X-buster, I’ve pondered these questions and even taken a stab at exploring my own answers in an alternate history book I recently wrote called Cyber Shogun Revolution. It pays tribute to a lot of the games I mentioned above. A massive revolution breaks out, motivated in part by individuals who question the status quo. Their true history is hard to figure out as the government leaders manipulate information and distort facts in favor of their own interpretation of the past. As tribute to some of my favorite games, it features a gigantic crimson mecha called the Sygma and Taro bots that duke it out with robot parasites. Mechas wield raiden swords (a nod to MGS2) and engage in Chrono Trigger tech-inspired attacks during a massive conflict with disparate sides questioning their given roles.

Ultimately, the questions about human legacy that propelled me all those years ago still stay with me and have me wondering about our fate. Maybe that’s a question I can’t ever answer, and maybe it’s for the AI to figure out on their own if humanity ends up destroying itself (hopefully not). Revolutions are supposed to bring about change for the good. But if games are any indication of what our future entails, we’re kind of screwed unless we stop acting so human.