Discord, the only way young people communicate via voice after we collectively agreed to stop answering phone calls several years ago, is trying to be more open about how it handles harassment, threats, doxxing, and other forms of abuse on its platform. In order to do this, it plans to release regular “transparency reports,” the first of which is available to peruse now.

The idea, says Discord, is to keep people clued in to the decision-making underlying the 250-million-user chat service so that people can understand why things do and, in some cases, don’t get done.

“We believe that publishing a Transparency Report is an important part of our accountability to you—it’s kind of like a city’s crime statistics,” said the company. “We made it our goal not only to provide the numbers for you to see, but also to walk you through some obstacles that we face every day so that you can understand why we make the decisions we do and what the results of those decisions are.”

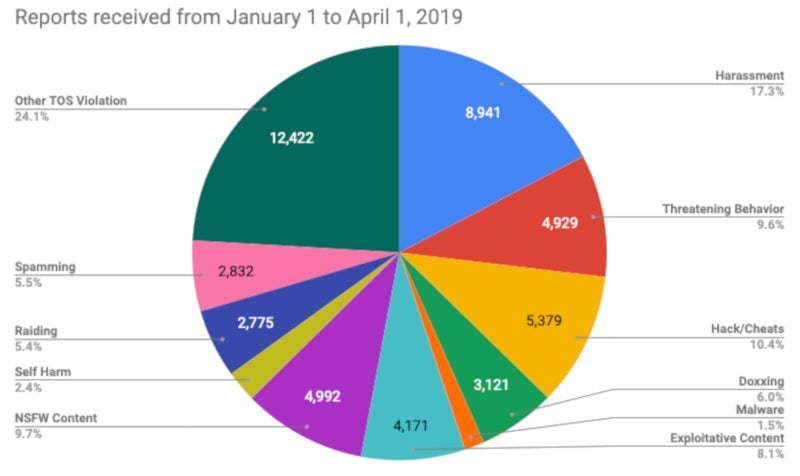

The numbers are fascinating, albeit not wholly unexpected if you display symptoms of being Too Online. First, a graph of reports received by Discord from the start of the year until April:

“Other” is the most reported category, but harassment comes in behind it at 17.3 percent. Significant pieces of the multi-colored pie also go to hacks/cheats, threatening behavior, NSFW content, exploitative content, doxxing, spamming, raiding, malware, and self-harm. Discord does not mince words in describing what these categories refer to. Exploitative content is defined as “A user discovers their intimate photos are being shared without their consent; two minors’ flirting leads to trading intimate images with each other” while one example of NSFW content is “A user joins a server and proceeds to DM bloody and graphic violent images (gore) to other server members.”

After Discord receives a report, the company’s trust and safety team “acts as detectives, looking through the available evidence and gathering as much information as possible.” Initially, they focus on reported messages, but investigations can expand into entire servers “dedicated to bad behavior” or historical patterns of rule-breaking. “We spend a lot of time here because we believe the context in which something is posted is important and can change the meaning entirely (like whether something’s said in jest, or is just plain harassment),” wrote Discord.

If there’s a violation, the team then takes action, which can mean anything from removing the content in question to removing a whole server from Discord. However, the percentage of reports that caused Discord to spring into action earlier this year was relatively small. Just 12.49 percent of harassment reports got actioned, for example. Other categories saw Discord intervene more often, but most percentages were still relatively small: 33.17 percent for threatening behavior, 6.86 percent for self-harm, 44.34 percent for cheats/hacks, 41.72 percent for doxxing, 60.15 percent for malware, 14.74 percent for exploitative content, 58.09 percent for NSFW content, 28.72 percent for raiding, and the big outlier, 95.09 percent for spam.

Discord explained that action percentages are lower than you might expect because many reports simply don’t pass muster. Some are false or malicious, with people taking words out of context or banding together to report innocent users. Others demand too much for too little. “We may receive a harassment report about a user who said to another user, ‘I hate your dog,’ and the reporter wants the other user banned,” said the company. Other reports that Discord doesn’t action might include information, but no concrete evidence. Lastly, users sometimes apply the wrong category to reports; Discord says it still actions those, though they may not count toward action percentages.

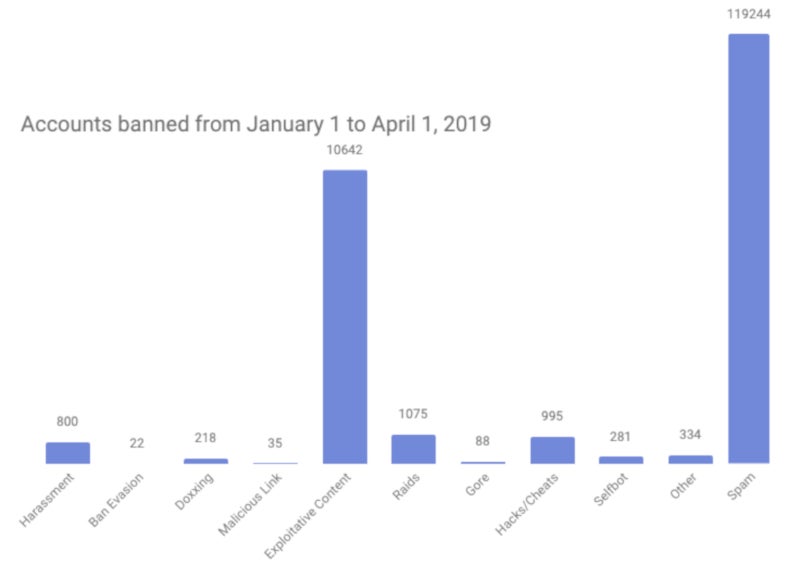

From January to April, the biggest contributors to bans were spam and exploitative content. Spam accounted for 89 percent of account bans—a total of 119,244 accounts. On the exploitative content side of things, Discord banned 10,642 accounts, and it says it’s doing its best to squelch that issue—perhaps due to its well-documented troubles with child porn in the past.

“We’ve been spending significant resources on proactively handling Exploitative Content, which encompasses non-consensual pornography (revenge porn/’deep fakes’) and any content that falls under child safety (which includes child sexual abuse material, lolicon, minors accessing inappropriate material, and more),” the company wrote. “We think that it is important to take a very strong stance against this content, and while only some four thousand reports of this behavior were made to us, we took action on tens of thousands of users and thousands of servers that were involved in some form of this activity.”

Discord also removed thousands of servers during the first few months of the year, mostly focusing on hacks/cheats. However, servers focused on hate speech, harassment, and “dangerous ideologies”—again, an area where Discord has struggled in the past—are also a big focus. On a related note, the company also discussed its response to videos and memes born of the March 14 Christchurch shooting.

“At first, our primary goal was removing the graphic video as quickly as possible, wherever users may have shared it,” the company said. “Although we received fewer reports about the video in the following hours, we saw an increase in reports of ‘affiliated content.’ We took aggressive action to remove users glorifying the attack and impersonating the shooter; we took action on servers dedicated to dissecting the shooter’s manifesto, servers in support of the shooter’s agenda, and even memes that were made and distributed around the shooting… Over the course of the first ten days after this horrific event, we received a few hundred reports about content related to the shooting and issued 1,397 account bans alongside 159 server removals for related violations.”

Discord closed out the report by saying it believes that this sort of transparency should be “a norm” among tech companies, so that people can “better determine how platforms keep their users safe.” The Anti-Defamation League, an anti-hate organization dedicated to fighting bigotry and defending civil rights, agrees.

“Discord’s first transparency report is a meaningful step toward real tech platform transparency, which other platforms can learn from,” the organization wrote in a statement about Discord’s report. “We look forward to collaborating with them to further expand their transparency efforts, so that the public, government and civil society can better understand the nature and workings of the online platforms that are and will continue to shape our society.”